- My conductivity meter is not communicating with PC-Titrate. What can I do to troubleshoot?

Checking the interface communication and software settings is a good place to start when troubleshooting a conductivity meter which has lost communication.

- What is Gran analysis and why is Gran alkalinity important?

Gran analysis is an effective alternative method for endpoint and pKa detection. Using a series of mathematical manipulations, standard titration curves are transformed into linear data called ‘Gran functions’. Endpoints and pKa’s are determined by performing a linear regression on these functions. In many cases, Gran analysis provides a more accurate endpoint, or identifies endpoints not evaluated in Standard Methods.
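As a rough illustration of the idea, the sketch below applies one common Gran function, F = (V0 + V) × 10^(-pH), to points recorded after the endpoint of an acid titration and takes the x-intercept of a least-squares line as the endpoint volume. The function name, the choice of Gran function, and the synthetic data are assumptions made for illustration; they are not taken from MANTECH software.

```python
import math

def gran_endpoint(volumes_ml, ph_values, sample_ml):
    """Estimate the endpoint volume (mL) from points past the endpoint of an acid titration."""
    # Gran function: proportional to the excess acid, so it is linear in titrant volume.
    f = [(sample_ml + v) * 10 ** (-ph) for v, ph in zip(volumes_ml, ph_values)]
    n = len(volumes_ml)
    mean_v = sum(volumes_ml) / n
    mean_f = sum(f) / n
    # Ordinary least-squares fit of F against V.
    sxx = sum((v - mean_v) ** 2 for v in volumes_ml)
    sxy = sum((v - mean_v) * (fi - mean_f) for v, fi in zip(volumes_ml, f))
    slope = sxy / sxx
    intercept = mean_f - slope * mean_v
    # The fitted line crosses F = 0 at the endpoint volume.
    return -intercept / slope

# Synthetic data: 100 mL sample, 0.02 N titrant, true endpoint at 5.00 mL.
sample_ml, titrant_n, true_endpoint_ml = 100.0, 0.02, 5.0
volumes = [5.5, 6.0, 6.5, 7.0]
ph = [-math.log10(titrant_n * (v - true_endpoint_ml) / (sample_ml + v)) for v in volumes]
print(round(gran_endpoint(volumes, ph, sample_ml), 3))
```

Real titration data are noisier than this synthetic set, so the choice of which points to include in the regression matters in practice.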

- What is alkalinity and how is it speciated into carbonate, bicarbonate and hydroxide fractions?

Alkalinity is the buffering capacity of a solution. It is a valuable water quality parameter used for many applications, including, but not limited to: drinking water treatment, domestic and industrial wastewater treatment, swimming pools, food and beverage, soil, agriculture, and other environmental testing.

The main compounds of alkalinity are: hydroxides (OH-), carbonates (CO3 2-), and bicarbonates (HCO3-). The buffering capacity of a solution depends on the absorption of positively charged hydrogen ions by negatively charged bicarbonate and carbonate ions. When bicarbonate and carbonate ions absorb hydrogen ions, there is a shift in equilibrium without a significant shift in pH. A sample with high buffering capacity will have high bicarbonate and/or carbonate content, and a greater resistance to changes in pH.

MANTECH complies with EPA Method 310.1, ASTM Method D 1067-92, and Standard Method 2320, for determining alkalinity, which calculates the concentrations of OH -, CO3 2-, and HCO3 -. Total alkalinity is calculated as the sum of these three species and is expressed in units of milligrams of calcium carbonate per litre (mg CaCO3/L).

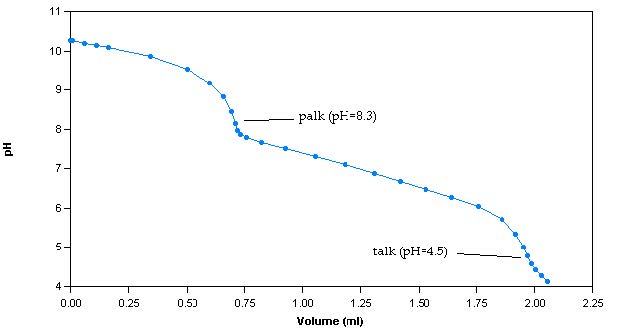

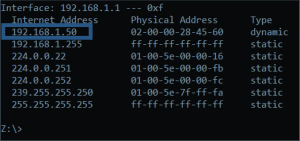

The alkalinity method involves titrating samples with a titrant of known concentration, commonly, 0.02N sulphuric acid (H2SO4), to pH endpoints of 8.3 and 4.5. The alkalinity calculated up to endpoint 8.3 is classified as phenolphthalein alkalinity (palk), whereas the alkalinity calculated up to endpoint 4.5 is classified as total alkalinity (talk). A titration curve is generated by plotting pH versus volume of dispensed titrant (Figure 1).

Figure 1: Sample titration curve for a 100ppm alkalinity sample

The titration curve is used to determine the volume of dispensed titrant at each endpoint. To calculate palk, the following equation is used:

$$\mathit{palk} = \frac{\mathit{xvol}(8.3) \times \mathit{tcon} \times 50\,000}{\mathit{svol}}$$

Where:

$\mathit{xvol}(8.3)$ = volume of titrant used to titrate to pH 8.3 (mL)

$\mathit{tcon}$ = normality of titrant (N)

$50\,000$ = equivalent weight of CaCO3 as defined in Standard Methods (mg/eq)

$\mathit{svol}$ = volume of sample titrated (mL)

talk is calculated using the following equation:

$$\mathit{talk} = \frac{\mathit{xvol}(4.5) \times \mathit{tcon} \times 50\,000}{\mathit{svol}}$$

Where:

$\mathit{xvol}(4.5)$ = volume of titrant used to titrate to pH 4.5 (mL)

$\mathit{tcon}$ = normality of titrant (N)

$50\,000$ = equivalent weight of CaCO3 as defined in Standard Methods (mg/eq)

$\mathit{svol}$ = volume of sample titrated (mL)

For samples with talk less than 20ppm, a low alkalinity titration procedure is used, as per Standard Method 2320. The titrant volume is recorded at pH 4.5 and again at pH 4.2, which is 0.3 pH units lower. To calculate talk of low alkalinity samples, the following equation is used:

$$\mathit{talk} = \frac{\left(2 \times \mathit{xvol}(4.5) - \mathit{xvol}(4.2)\right) \times \mathit{tcon} \times 50\,000}{\mathit{svol}}$$

Where:

$\mathit{xvol}(4.5)$ = volume of titrant used to titrate to pH 4.5 (mL)

$\mathit{xvol}(4.2)$ = volume of titrant used to titrate to pH 4.2 (mL)

$\mathit{tcon}$ = normality of titrant (N)

$50\,000$ = equivalent weight of CaCO3 as defined in Standard Methods (mg/eq)

$\mathit{svol}$ = volume of sample titrated (mL)
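The three equations above can be sketched in a few lines of Python. The function and argument names are hypothetical and simply mirror the symbols xvol, tcon, and svol; the example volumes are made up for illustration.

```python
CACO3_FACTOR = 50_000  # the 50 000 factor from the equations above

def alkalinity(xvol_ml, tcon_n, svol_ml):
    """palk or talk in mg CaCO3/L, depending on which endpoint volume is passed in."""
    return xvol_ml * tcon_n * CACO3_FACTOR / svol_ml

def low_alkalinity(xvol_45_ml, xvol_42_ml, tcon_n, svol_ml):
    """talk in mg CaCO3/L for low alkalinity samples, from the pH 4.5 and pH 4.2 volumes."""
    return (2 * xvol_45_ml - xvol_42_ml) * tcon_n * CACO3_FACTOR / svol_ml

# 100 mL sample titrated with 0.02 N acid; 10.0 mL dispensed at pH 4.5.
print(alkalinity(10.0, 0.02, 100.0))
# Low alkalinity sample: 1.50 mL at pH 4.5 and 1.80 mL at pH 4.2.
print(low_alkalinity(1.50, 1.80, 0.02, 100.0))
```

The first example works out to roughly 100 mg CaCO3/L and the second to roughly 12 mg CaCO3/L.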

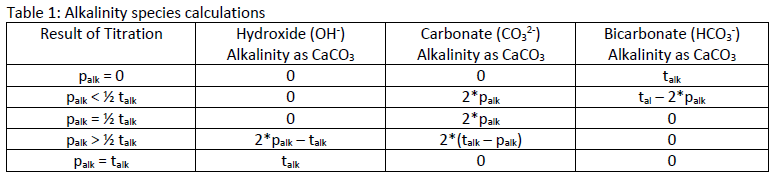

The values for talk and palk can be used to determine the concentrations of OH-, CO3 2-, and HCO3-, and indicate the following conditions:

1. OH- alkalinity is present if palk is greater than half of the talk

2. CO3 2- alkalinity is present when palk is not zero, but is less than talk

3. HCO3- ions are present if palk is less than half of the talk

Table 1 shows the calculations for determining the concentration of each species of alkalinity based on the results for palk and talk.

Table 1: Alkalinity species calculations

MANTECH’s MT Series automates alkalinity and low alkalinity measurements, following EPA, ASTM, ISO and Standard Methods. For more information on how MANTECH can simplify your alkalinity analysis, refer to the MT Series product info page or contact your local MANTECH distributor.

For more information on MANTECH’s method for automated alkalinity measurement and how the species of alkalinity are calculated, download the pdf.
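Table 1 is not reproduced in this text, so the sketch below assumes the alkalinity relationships commonly published with Standard Method 2320 (P = palk, T = talk). The function name is hypothetical, and the results should be checked against the table in the linked document before use.

```python
def alkalinity_species(palk, talk):
    """Return (hydroxide, carbonate, bicarbonate) alkalinity, each in mg CaCO3/L."""
    if palk == 0:
        return 0.0, 0.0, talk                        # bicarbonate only
    if palk < talk / 2:
        return 0.0, 2 * palk, talk - 2 * palk        # carbonate and bicarbonate
    if palk == talk / 2:
        return 0.0, 2 * palk, 0.0                    # carbonate only
    if palk < talk:
        return 2 * palk - talk, 2 * (talk - palk), 0.0  # hydroxide and carbonate
    return talk, 0.0, 0.0                            # hydroxide only (palk equals talk)

for palk, talk in [(0, 100), (20, 100), (50, 100), (80, 100), (100, 100)]:
    print(palk, talk, alkalinity_species(palk, talk))
```

In every branch the three returned values sum to talk, which is a quick sanity check on any implementation. With measured data, the exact-equality branches rarely trigger, so a tolerance around palk = talk / 2 may be more practical.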

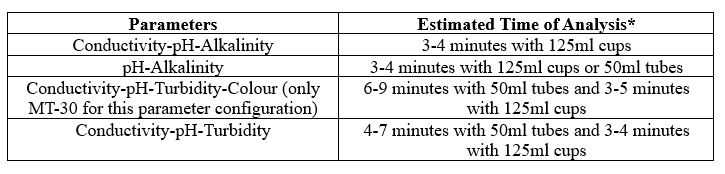

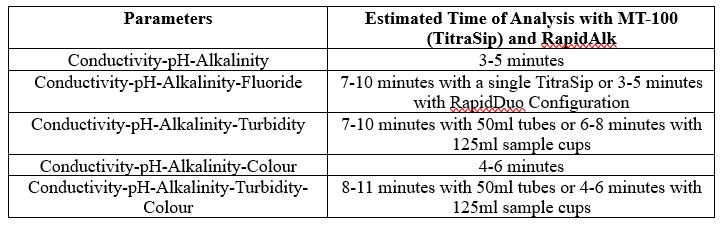

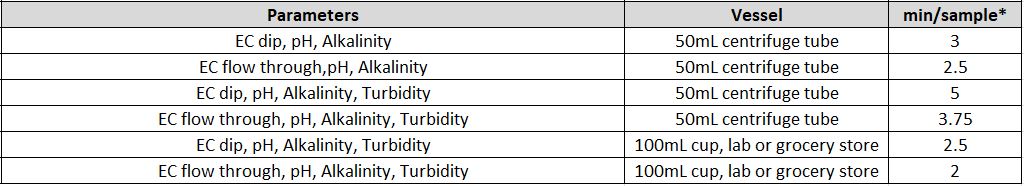

- What is the time of analysis per parameter and for multi-parameter analysis?

The timing for analysis of different parameters depends on several different factors, like the concentration of the sample and the equipment used.

Another factor is the total available sample volume in the vessel on the AutoMax Autosampler bed. With some combinations of parameters, larger sample volume allows for simultaneous measurements, resulting in faster total analysis time than sequential measurement. A larger sample volume may require a larger sample cup, which decreases capacity on the same model Autosampler. For example, an AM73 can accommodate 73 x 50 mL tubes, or 30 x 125 mL cups. In some cases, the same set of parameters can be analyzed 2-5 minutes faster when using the 125 mL cups versus the 50 mL tubes.

It is important to understand your requirements in terms of capacity and throughput speed, the total batch capacity and/or daily sample loads required, and whether overnight, unattended analyses will be needed.

Individual Parameters

Combined Parameters

MT-10 & MT-30 Models

* If alkalinity is combined with turbidity and/or colour an MT-100 System must be quoted

MT-100 Model

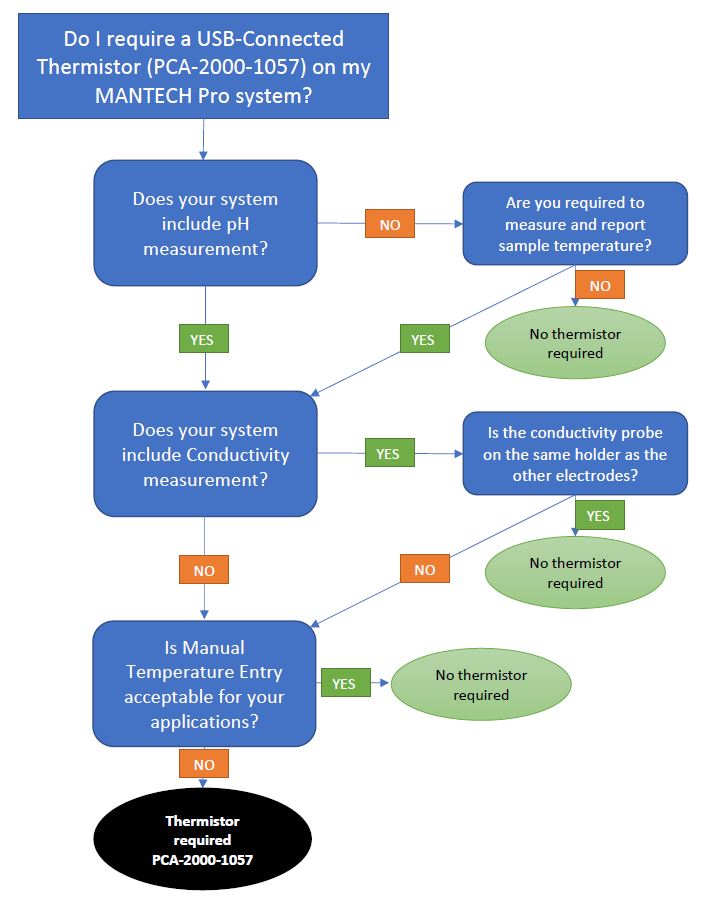

- How do MANTECH systems measure and record temperature?

The standard method for measuring temperature is with a USB-connected stainless steel thermistor probe. The specifications for this probe are below:

- Communication: USB controlled by MANTECH Pro Software

- Sheath Material: Super OMEGACLAD XL/ Stainless Steel/Inconel

- Temp Range: 0 to 1038 °C

- Diameter: 0.062 in

- Length: 6 in

- Measurement to 3 decimal places

- Autocalibration feature in MANTECH Pro software

Alternatively, when measuring conductivity, one can measure the temperature directly from the conductivity probe. This temperature is also displayed directly on the conductivity meter screen.

MANTECH has the ability to use either of these measurement methods for pH temperature compensation, depending on customer preference and system configuration.

MANTECH temperature sensors are available in both PT1000 and 10kNTC styles. PT1000 sensors have a linear positive-slope relationship between resistance and temperature with a resistance of 1000 ohms at 0°C, and 10kNTC sensors have a curved negative-slope relationship between resistance and temperature with a resistance of 10,000 ohms at 25°C. Both these models of temperature sensor perform well and comparably within the 0-100°C temperature range that liquid samples exist in. If you require a specific type of temperature sensor, please feel free to let MANTECH know and we will accommodate.
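The two resistance-temperature relationships described above can be sketched as follows. The 1000 ohm and 10,000 ohm reference points come from the text; the platinum coefficient (0.00385 per °C, used here as a simple linear approximation) and the NTC beta constant (3950 K) are assumed typical values, not MANTECH specifications, so check the sensor datasheet for real work.

```python
import math

PT1000_R0_OHM = 1000.0   # resistance at 0 °C (from the text above)
PT1000_ALPHA = 0.00385   # per °C; assumed nominal platinum coefficient
NTC_R25_OHM = 10_000.0   # resistance at 25 °C (from the text above)
NTC_BETA_K = 3950.0      # hypothetical beta constant for illustration only

def pt1000_resistance(temp_c):
    """Linear, positive-slope approximation for a PT1000 sensor."""
    return PT1000_R0_OHM * (1 + PT1000_ALPHA * temp_c)

def ntc10k_resistance(temp_c):
    """Curved, negative-slope beta-model approximation for a 10k NTC thermistor."""
    temp_k = temp_c + 273.15
    return NTC_R25_OHM * math.exp(NTC_BETA_K * (1 / temp_k - 1 / 298.15))

for t in (0, 25, 50, 100):
    print(t, round(pt1000_resistance(t), 1), round(ntc10k_resistance(t), 1))
```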

- Why do I need to standardize my NaOH titrant?

NaOH is highly hygroscopic, meaning that it absorbs water from the air. Therefore, over time the titrant will become more dilute as it absorbs water. NaOH can be standardized by titrating it into a sample of potassium hydrogen phthalate (KHP) of known concentration. For example, titrate 0.05 N KHP with 0.1 N NaOH to an endpoint; the precise concentration of the NaOH can then be calculated from the volume of NaOH added. For more information on standardizing NaOH titrant, please refer to Standard Methods 2310. To limit the amount of water absorbed, use a glass drying tube with a cotton ball inserted to keep moisture out.
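As a minimal sketch of the standardization arithmetic, the equivalents of KHP in the sample equal the equivalents of NaOH dispensed at the endpoint, so the NaOH normality follows from the two volumes. The function name and the example volumes are assumptions for illustration.

```python
def naoh_normality(khp_n, khp_ml, naoh_ml):
    """Normality of NaOH from a KHP standardization: N1 * V1 = N2 * V2."""
    return khp_n * khp_ml / naoh_ml

# 50.0 mL of 0.05 N KHP required 25.6 mL of nominally 0.1 N NaOH.
print(naoh_normality(khp_n=0.05, khp_ml=50.0, naoh_ml=25.6))
```

Here the titrant works out slightly weaker than its nominal 0.1 N, which is the direction expected if it has absorbed water.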

- Why are the pH electrode slope and measuring characteristics different at higher pH values, for example pH 13?

Changes to slope at higher pHs

Alkaline Error or Sodium Error occurs when pH is very high (e.g. pH 12) because Na+ concentration is high (from NaOH used to raise pH) and H+ is very low.

Electrodes respond slightly to Na+ and give a false low reading. This is related to the concept of selectivity coefficients where the electrode responds to many ions but is most selective for H+. This problem occurs because Na+ is 10 orders of magnitude higher than H+ in the solution.

High pH electrodes use a 0-14 pH glass. This electrode will read pH 14 (1 M NaOH) to be around pH 13.7 with a 0.3 pH sodium error.

A standard pH electrode uses a 0-12 pH glass. The electrode will read pH 14 (1 M NaOH) to be around pH 12.4 with a 1.6 pH sodium error.

Alkaline error

The alkaline effect is the phenomenon where H+ ions in the gel layer of the pH-sensitive membrane are partly or completely replaced by alkali ions. This leads to a pH measurement which is too low in comparison with the number of H+ ions in the sample. Under extreme conditions where the H+ ion activity can be neglected the glass membrane only responds to sodium ions. Even though the effect is called the alkaline error, it is only sodium or lithium ions which cause considerable disturbances. The effect increases with increasing temperature and pH value (pH > 9), and can be minimized by using a special pH membrane glass.

Sodium Ion Error

Although the pH glass measuring electrode responds very selectively to H+ ions, there is a small interference caused by similar ions such as lithium, sodium, and potassium. The amount of this interference decreases with increasing ion size. Since lithium ions are normally not in solutions, and potassium ions cause very little interference, Na+ ions present the most significant interference.

Sodium ion error, also referred to as alkaline error, is the result of alkali ions, particularly Na+ ions, penetrating the glass electrode silicon‐oxygen molecular structure and creating a potential difference between the outer and inner surfaces of the electrode. H+ ions are replaced with Na+ ions, decreasing the H+ ion activity, thereby artificially suppressing the true pH value. This is the reason pH is sometimes referred to as a measure of the H+ ion activity and not H+ ion concentration.

Na+ ion interference occurs when the H+ ion concentration is very low and the Na+ ion concentration is very high. Temperature also directly affects this error. As the temperature of the process increases, so does the Na+ ion error.

Depending on the exact glass formulation, Na+ ion interference may take effect at a higher or lower pH. There is no glass formulation currently available that has zero Na+ ion error. Since some error will always exist, it is important that the error be consistent and repeatable. With many glass formulations, this is not possible since the electrode becomes sensitized to the environment it was exposed to prior to experiencing high pH levels. For example, the exact point at which the Na+ ion error of an electrode occurs may be 11.50 pH, after immersion in tap water, but 12.50 pH after immersion in an alkaline solution.

Controlled molecular etching of special glass formulations can keep Na+ error consistent and repeatable.

This is accomplished by stripping away one molecular layer at a time. This special characteristic provides a consistent amount of lithium ions available for exchange with the hydrogen ions to produce a similar millivolt potential for a similar condition.

- What is the sample capacity for each AutoMax Sampler based on available tube and cup styles?

Each of our AutoMax samplers is compatible with a variety of common sample cup and tube styles to accommodate up to 4 probes. To view available sample vessels and AutoMax sample capacities, read our technical bulletin here.

- What should I do if my titration standard is measuring too high or too low?

If your titration standards are not reading the correct concentrations, for example, the alkalinity standard reading is low, first make sure the titrant has been standardized. Secondly, the precision of the results can indicate if this is a mechanical or chemical problem. If the results are precise, it is likely a chemical issue. Check your standards and titrant standardization. It is also possible that the sample volume may be incorrect.

- What is the minimum total liquid volume that can be measured for pH, conductivity and alkalinity with the MT Systems?

When using 50 mL sample tubes, the minimum total volume can be as small as 6 mL using the TitraPro4 pH electrode and the MANTECH 5 mm conductivity probe. This is due to the lower immersion depth of these electrodes and precise autosampler coordinate specifications. When using 125 mL sample cups, the minimum total volume can be as small as 15 mL.

- What is the difference between 2-pole and 4-pole conductivity probes?

4-pole sensors have a much broader linear measurement range and are not sensitive to contamination.

- How does MANTECH account for temperature compensation and correction in conductivity measurements?

Conductivity is a temperature dependent measurement. All substances have a conductivity coefficient which varies from 1% per °C to 3% per °C for most commonly occurring substances. The automatic temperature compensation on the MANTECH Conductivity meter defaults to 1.91% per °C, this being adequate for most routine determinations.

Temperature-corrected conductivity is calculated as follows:

1. Subtract the current temperature of your standard from 25°C (or whichever reference temperature applies).

2. Multiply the result by 1.91%, which is the default temperature coefficient.

3. Multiply the result by the uncorrected conductivity value.

4. Add the result to the uncorrected conductivity value. If the sample temperature is higher than the reference temperature, the results of steps 1, 2, and 3 are negative numbers, so this step becomes a subtraction from the uncorrected conductivity value.

5. The result is the corrected conductivity value.

Example: The uncorrected conductivity value is 1200 µS, the current temperature is 21.4°C, the reference temperature is 25°C, and the default correction factor is 1.91%.

- 25.0 – 21.4 = 3.6

- 3.6 * 0.0191 = 0.06876

- 0.06876 * 1200 = 82.51

- 1200 + 82.51 = 1282.51 µS (temperature-corrected value for a reference temperature of 25°C)
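The procedure above can be sketched as a small Python function. The function and parameter names are hypothetical; the arithmetic follows the steps as described in this answer and is not taken from the meter firmware.

```python
def temperature_corrected_conductivity(raw_us, sample_temp_c,
                                       reference_temp_c=25.0,
                                       coeff_percent_per_c=1.91):
    """Apply the linear temperature correction described in the steps above."""
    delta_c = reference_temp_c - sample_temp_c        # temperature difference
    fraction = delta_c * coeff_percent_per_c / 100.0  # scale by the coefficient
    correction = fraction * raw_us                    # scale by the raw reading
    return raw_us + correction                        # negative when sample is warmer

# Worked example from the text: 1200 uS measured at 21.4 °C.
print(round(temperature_corrected_conductivity(1200.0, 21.4), 2))
```

Passing a coefficient of 0 returns the raw value unchanged, which matches the behaviour described for a COEFF setting of 0.00.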

Conductivity readings varying with temperature may be due to the substances under test having a coefficient other than the typical value of 1.91% per °C. To eliminate this variation it is necessary to maintain all samples at the reference temperature by use of a thermostatic water bath or equivalent.

Adjustment may be made by entering the 4510 conductivity meter SETUP menu and selecting COEFF. The reading can then be adjusted to the required value (0.00 to 4.00) by using the keypad. A setting of 0.00 will mean that there is no temperature compensation being applied.

- How is a Method Detection Limit (MDL) defined?

A Method Detection Limit (MDL) is defined in slightly differing ways by the US EPA and the APHA. The definitions are briefly described below:

MDL as per US EPA:

The method detection limit (MDL) is defined as the minimum measured concentration of a substance that can be reported with 99% confidence that the measured concentration is distinguishable from method blank results.

MDL as per APHA:

The MDL is defined as the constituent concentration that, when processed through the entire method, produces a signal that has a 99% probability of being different from the blank.

- How do I remove my electrode for maintenance?

Most MANTECH electrodes are connected to the Interface module via a BNC cable with a detachable S7 connection. This S7 connection is located at the electrode cable junction, allowing for easy detachment from the cable, and removal of the electrode while leaving the cable in place. This applies to all electrodes except the Ammonia Electrode and all Conductivity electrodes. See below for pictures of a pH electrode with the S7 connection attached, and detached.

- Why is my conductivity analysis reporting negative values?

Negative conductivity values at the low end can occur because PC-Titrate applies a software calibration to the raw conductivity values from the meter. These readings are not wrong; they should be interpreted as zero (0). This is often seen with DI water measurements. If you turn off the software calibration, you will get exactly the same values as displayed on the conductivity meter.

- How do I change the buret IP address?

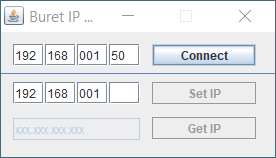

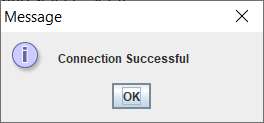

MANTECH Burets have a static IP address assigned to them. These addresses can be changed if there are communication issues between the Buret and the software. To avoid IP address conflicts, MANTECH recommends that all Burets on the same network have unique IP addresses, not just ones on the same system. If an IP address needs to be changed, then you can manually change it using the following steps:

1. Open the Network and Sharing Centre on your computer through the advanced network settings, or by searching for it in the Control Panel.

2. Open Command Prompt on your computer by searching for it in Windows Search.

3. Extract this folder to your computer.

4. Plug in the buret. In the Network and Sharing Centre, find the active network for the buret (Ethernet connection), select the blue “Ethernet” link, and open the Properties.

5. Internet Protocol Version 6 should be unselected, and Internet Protocol Version 4 should be selected. Click OK and open the Details for the Ethernet status. The Value for IPv4 Address should be 192.168.1.1. If not, change the IP address in the Internet Protocol Version 4 properties.

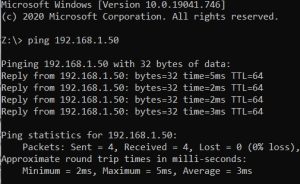

6. In Command Prompt, type ping followed by the IP address found on the back of the buret, then press Enter. For example, type “ping 192.168.1.50” without the quotation marks and press Enter. The buret should reply.

7. Open the Buret_IP_Change folder now on your computer and open the application that is in the bin subfolder.

8. Type in the IP address and select “Connect”. Click OK. Then type in the new IP address and select “Set IP”.

9. In Command Prompt again, ping the new IP address to ensure it works.

Specific notes:

- If the IP address is not known, then in command prompt, type in “arp -a” without the quotes and the IP address for the buret will be given as the first line under the IPv4 Address Value. This can be done after Step 5.

- If using PC-Titrate, ensure that the version used is 889 or later. Previous versions do not support the Ethernet burets without an upgrade. Contact [email protected] for the Ethernet buret software upgrade.

- If using MANTECH Pro, you can find the IP address of the buret (if it is not the one already in the address line) in hardware configuration using the “Configure Adapter”. The software will find the IP address that works for that buret and can be used to test the ping as well for buret response.
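Before setting a new address, it can help to confirm that it is unique and reachable from the PC adapter. The sketch below uses Python's standard `ipaddress` module for that check. The function name is hypothetical, and the /24 subnet (255.255.255.0) is an assumption that should be compared with your adapter settings.

```python
import ipaddress

def check_buret_address(new_ip, pc_ip="192.168.1.1", prefix_len=24, in_use=()):
    """Return a list of problems with a proposed buret IP address (empty if none found)."""
    problems = []
    address = ipaddress.ip_address(new_ip)
    pc_address = ipaddress.ip_address(pc_ip)
    # strict=False builds the network from a host address such as 192.168.1.1.
    network = ipaddress.ip_network(f"{pc_ip}/{prefix_len}", strict=False)
    if address not in network:
        problems.append(f"{address} is outside {network}")
    if address == pc_address:
        problems.append(f"{address} is the PC adapter address")
    if address in {ipaddress.ip_address(ip) for ip in in_use}:
        problems.append(f"{address} is already assigned to another buret")
    return problems

# One buret already uses 192.168.1.50; propose 192.168.1.51 for the next one.
print(check_buret_address("192.168.1.51", in_use=["192.168.1.50"]))
```

An empty list means no conflict was found by these checks; the ping test in the final step is still the real confirmation that the buret responds.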

- What are the benefits of IntelliRinse?

IntelliRinse™ is beneficial because it uses real-time measurement to provide confirmation that your probes are 100% clean, eliminating chances of cross-contamination.

Active IntelliRinse™ systems allow users to set user-defined values (e.g. rinse to a specific conductivity value) or to adjust the rinse intensity based on previous sample concentrations before moving to the next sample. This improves the rinsing of probes/electrodes so that cross-contamination does not occur, even with particularly dirty or high-strength samples. There is also the option to have the final value measured during rinsing shown in a column on the results report, for a visual confirmation directly alongside sample results.

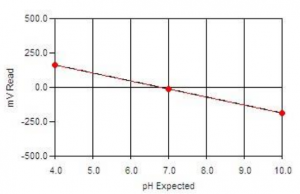

- What are the Different Types of Calibration Profiles?

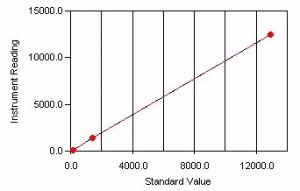

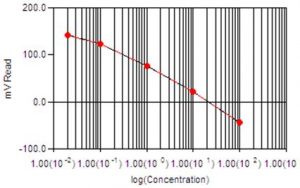

Calibrations can be linear, logarithmic, single-line, and multi-line fit.

The calibration method used depends on the method required.

pH and Color calibrations are linear, single-line fit calibrations.

Conductivity and Turbidity calibrations are multi-line, linear type calibrations.

ISE (Fluoride, Chloride, Ammonia, etc.) calibrations use a logarithmic, multi-line calibration type.

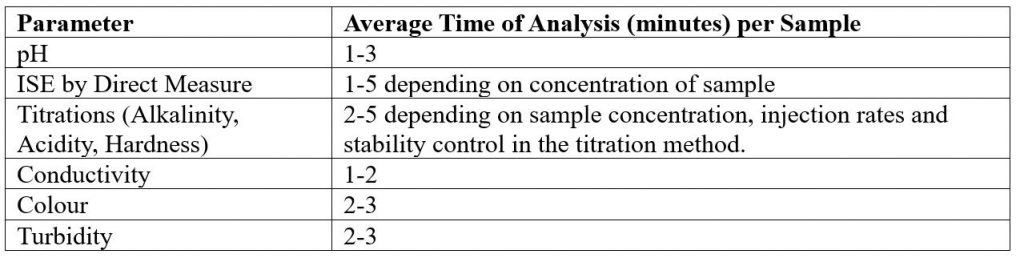

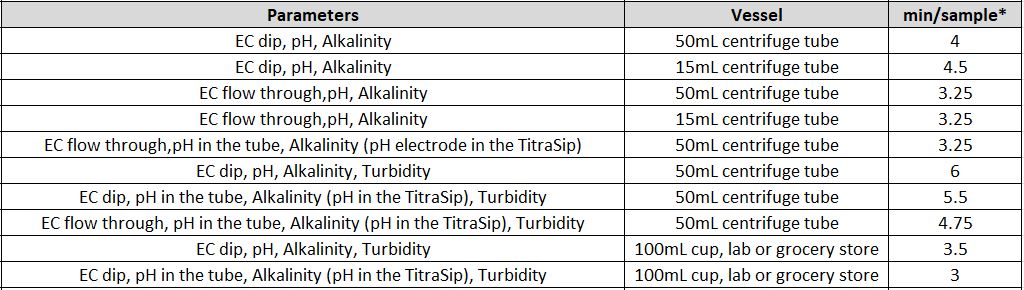

- What is the Time of Analysis for Different Parameters?

The timing for analysis of different parameters depends on a few different factors, like the concentration of the sample and the equipment used.

Another factor is the total available sample volume in the vessel on the AutoMax Autosampler bed. With some combinations of parameters, larger sample volume allows for simultaneous measurements, resulting in faster total analysis time than sequential measurement. A larger sample volume may require a larger sample cup, which decreases capacity on the same model Autosampler. In some cases, the same set of parameters can be analyzed 2-5 minutes faster when using the 125 mL cups versus the 50 mL tubes.

It is important to understand your customers’ requirements in terms of capacity and throughput speed, the total batch capacity and/or daily sample loads required, and whether overnight, unattended analyses will be needed.

*** Note that all times of analysis are halved if Dual-Analysis option is included with system***

Individual Parameters

Combined Parameters

MT-10 & MT-30 Models

MT-100 Model

* Alkalinity titrations may take between 1-3 minutes depending on sample concentration. Times estimated assuming 50ppm alkalinity concentration.

EC = Electrical Conductivity

- How do I clean my conductivity probe?

For water soluble contaminants, rinse probe in deionized (DI) water. If ineffective, soak probe in warm DI water with household detergent for 15 – 30 minutes.

For oil-based contaminants, rinse probe in ethanol or acetone for short (5-minute) periods.

After cleaning, rinse probe in DI water to remove residual cleaning reagents. Perform a meter calibration before proceeding with sample analysis.

- What is the wavelength measured for Turbidity by Standard Methods (White Light)?

Standard Method 2130B (Nephelometric Method) specifies that a laboratory or process nephelometer should have a detector system with a spectral peak response of 400 to 600 nm. MANTECH’s T10 Turbidity meter and automated turbidity applications conform to this requirement.

- What is the recommended type of standard for automated Turbidity Calibrations and Quality Control?

MANTECH recommends the use of turbidity standards made with suspensions of microspheres of styrene-divinylbenzene copolymer for all turbidity applications. Standard Methods dictate that “Secondary standards made with suspensions of microspheres of styrene-divinylbenzene copolymer typically are as stable as concentrated formazin and are much more stable than diluted formazin.”

Any dilutions used to prepare turbidity standards, as well as blanks, must use degassed DI water. This is especially important for accurate low-end turbidity analysis, as there is often a positive bias due to bubbles introduced into the DI water solution when it is being produced/dispensed. This effect becomes more noticeable with lower NTU readings, and can cause challenges with performing an accurate calibration. To degas DI water, it must be dispensed into a very clean bottle or container, and left to sit for ~24h. You will see bubbles form on the side of the container as the water degasses. After degassing, it is ready to use for blanks and standards preparation.

- Do I need a stirrer for my conductivity measurements?

MANTECH utilizes rapid dipping of conductivity probes into sample tubes to mix samples and ensure a stable analysis of conductivity from the probe. This style of mixing is applicable to systems featuring a 12mm conductivity probe dipping into 50mL sample tubes, and systems featuring a 5mm conductivity probe dipping into 15mL sample tubes.

For all other combinations of probe and sample vessel, a stirrer is required to ensure proper mixing is achieved.

- How does MANTECH account for temperature compensation and correction in pH measurements?

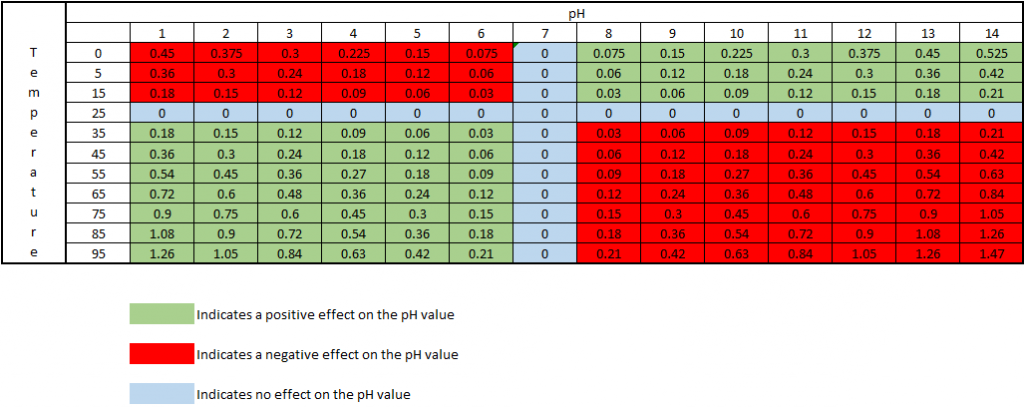

As the temperature of a solution changes, the actual pH changes. This is not an error of the probe or meter being used, but is the actual pH of the solution at that particular temperature. The temperature effect on the pH value is 0.003 pH units per °C away from 25°C, per pH unit away from pH 7. This effect can be either negative or positive, depending on whether the temperature is above or below 25°C, and whether the pH is above or below pH 7. At 25°C and pH 7, there is no change in the pH value.

The chart below shows how the actual pH changes with temperature and pH, which allows you to correct the pH reading to 25°C. As an example, if a sample measured pH 5 at a temperature of 5°C, the chart indicates this is a negative effect, therefore the sample pH would be 5 – 0.12 = pH 4.88 corrected to 25°C:

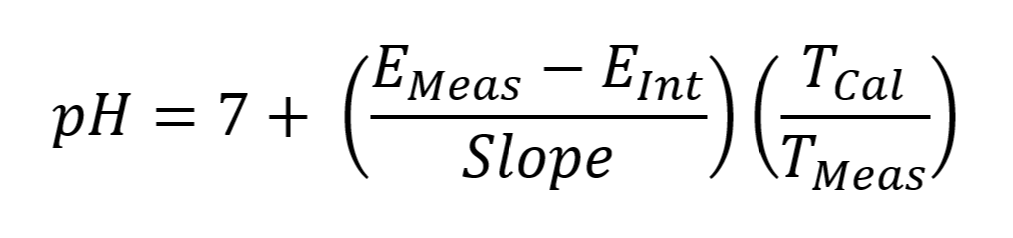
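The 0.003 rule above can be sketched as a short function. The chart itself is not reproduced in this text, so the sign convention below is an assumption chosen to reproduce the worked example (pH 5 at 5°C giving pH 4.88); the function name is hypothetical and this is not the MANTECH software implementation.

```python
def ph_corrected_to_25c(ph_measured, temp_c, coeff=0.003):
    """Estimate the pH at 25 °C from a reading taken at temp_c."""
    # Effect size: 0.003 pH per °C away from 25 °C, per pH unit away from pH 7.
    shift = coeff * (temp_c - 25.0) * (ph_measured - 7.0)
    return ph_measured - shift

# Worked example from the text: pH 5 measured at 5 °C.
print(round(ph_corrected_to_25c(5.0, 5.0), 2))
```

At 25°C, or at pH 7, the shift is zero and the reading is returned unchanged, which agrees with the description above.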

MANTECH software accounts for temperature by recording the temperature during calibration via a thermistor probe, then correcting the pH reading at the time of measurement to account for the difference between the measured temperature of the sample and that of the calibration buffers. The reported pH value is the corrected value at the sample temperature. The equation below shows how the PC-Titrate software calculates pH:

EMeas = Voltage measured by the electrode at the time of titration (mV)

EInt = Voltage of the intercept value calculated from the calibration equation (mV)

Slope = Slope of the line calculated from the calibration equation (mV)

TMeas = Temperature measured at the time of pH measurement (K)

TCal = Temperature taken at the time of the last calibration (K)

MANTECH also offers the option to report pH values corrected to a set temperature, such as 25°C. This is implemented in the software as an additional step after the sample pH is recorded. MANTECH offers this as a feature for new MT-Series systems, or as an upgrade to existing MANTECH systems.

For more information please follow this link or contact us at [email protected].

- How do I clean my color flow cell?

For optimal performance, the flow cell should be cleaned anywhere from once a month to once a year, depending on the type of samples being analysed. Manually pump 50 mL of 0.1 N hydrochloric acid into the color module and leave the cell to soak overnight. In the morning, pump 100 mL of deionized water through the module to rinse the cell. This process can be automated to occur after every rack of samples run.

- How do I store my MANTECH Electrodes?

MANTECH recommends storing pH and other electrodes immersed in a 1:10 dilution of pH 4 buffer in tap water. Conductivity electrodes can be stored in air for system configurations that do not place them on the same holder as another electrode.

For MANTECH’s ion-selective electrodes, MANTECH recommends storing each electrode in a dilute solution of the ion that it measures. For example, store your chloride electrode in a 1.0 × 10⁻² M chloride solution.

For a full list of electrodes and their recommended storage, please click here.

- What is the chemical compatibility of the tubing in MANTECH pumps?

The tubing used in MANTECH peristaltic and metering pumps is compatible with many types of chemicals. The following is a list of chemicals that are not recommended to be used with MANTECH tubing/pumps.

*Chemicals without listed concentrations are assumed to be 100% concentrations/the maximum percent solubility in water.

| | | |
|---|---|---|
| Acetaldehyde | Ethyl Benzoate | Methyl Methacrylate |
| Acetone | Ethylene Bromide | Mineral Oil |
| Aliphatic Hydrocarbons | Fluoboric Acid, 48% in w | Mineral Spirits |
| Amyl Alcohol | Fluorine Gas | Motor Oil |
| Aqua Regia | Formaldehyde, 37% in w | Naphtha |
| Aromatic Hydrocarbons | Fuel Oil | Naphthalene |
| ASTM Reference No. 2 Oil | Furfural | Nitric Acid, 68-71% in w |
| ASTM Reference No. 3 Oil | Gasoline, Automotive | Nitrobenzene |
| Benzaldehyde | Heptane | Nitromethane |
| Benzene | Hexane | Oils, Essential |
| Benzenesulfonic Acid | Hydrobromic Acid, 20-50% in w | Oils, Hydraulic (Phosphate Ester) |
| Bromine, Anhydrous Liquid | Hydrobromic Acid, 100% in w | Oils, Hydrocarbon |
| Butyl Alcohol | Hydrofluoric Acid, 10% in w | Ortho Dichlorobenzene |
| Carbon Disulfide | Hydrofluoric Acid, 25% in w | Paraffins |
| Carbon Tetrachloride | Hydrofluoric Acid, 40-48% in w | Picric Acid, 1% in w |
| Chlorine, Wet Gas | Isooctane | Skydrol 500A |
| Chlorobenzene, Mono, Di, Tri | Jet Fuel, JP8 | Styrene Monomer |
| Chloroform | Kerosene | Sulfur Chloride |
| Chlorosulfonic Acid | Ketones | Sulfuric Acid, 95-98% in w |
| Cresol (m, o, or p) | Lemon Oil | Tetrahydrofuran |
| Cyclohexane | Limonene-D | Toluene |
| Cyclohexanone | Lubricating Oils, Petroleum | Trichloroethylene |
| Diesel Fuel | Methyl Ethyl Ketone (MEK) | Turpentine |
| Dioxane | Methyl Isobutyl Ketone | Xylene |

- How do I prepare my pH electrode for long-term storage or shipping?

To safely store or ship your MANTECH pH electrode, first fill the electrode with fill solution and fill the storage boot ¾ full with fill solution. Then, insert the probe into the boot and screw tight. Place the fill solution plug in its hole and parafilm over the plug, as well as around the body. Then, parafilm over the cable connection spot, making sure not to overlap with the parafilm around the plug.

- How do I replace the membrane of my ammonia ion selective electrode (ISE)?

Watch this short and informative demonstration on how to replace the membrane on an Orion ammonia ion selective electrode (ISE).

- What is the measuring range for Dissolved Oxygen on MANTECH systems?

MANTECH systems utilize one of two dissolved oxygen probes from YSI for automated dissolved oxygen determination. The measuring ranges of both probes are listed below, along with a link to the complete specification sheets.

YSI FDO 4410 IDS Sensor

- Dissolved Oxygen Range: 0 to 20 mg/L

- Air Saturation Range: 0 to 200%

- Temperature Measurement Range: 0 to 50°C

- Technical Specifications

YSI ProOBOD Sensor

- Dissolved Oxygen Range: 0 to 50 mg/L

- Air Saturation Range: 0 to 500%

- Temperature Measurement Range: Ambient 10 to 40°C; Compensation -5 to 50°C

- Technical Specifications

- What is the method for Hot Acidity determination?

Hot acidity is a variation of the standard acidity titration using NaOH, with some pretreatment steps performed before the titration. It is outlined in Standard Methods SM2310B, officially called “hot peroxide treatment procedure for acidity determination”. This method is applicable to mine wastes and other samples containing large amounts of metals, such as heavy industrial wastewater.

From SM2310B:

- Use the hot peroxide procedure to pretreat samples known or suspected to contain hydrolysable metal ions or reduced forms of polyvalent cation, such as iron pickle liquors, acid mine drainage, and other industrial wastes.

- Hot peroxide treatment acidity method:

- Pipet sample volume into vessel

- Measure pH

- If pH > 4.0, add small increments of 0.02N H2SO4 to reduce pH to 4.0 or less

- Remove samples from MANTECH system

- Add 5 drops of 30% H2O2 and boil for 2 to 5 minutes

- Cool to room temperature

- Place samples back on MANTECH system and titrate with standard alkali (NaOH) to pH 8.3

- The calculation for hot acidity is shown below:

- Acidity (mg CaCO3/L) = [((mL NaOH consumed × Normality of NaOH) – (mL H2SO4 consumed × Normality of H2SO4)) × 50,000] / Sample volume (mL)
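The hot acidity calculation can be sketched as below: the equivalents of H2SO4 added during pretreatment are subtracted from the equivalents of NaOH consumed before the 50,000 factor is applied. The function and argument names, and the example volumes, are assumptions for illustration.

```python
def hot_acidity(naoh_ml, naoh_n, h2so4_ml, h2so4_n, sample_ml):
    """Hot acidity in mg CaCO3/L from titrant volumes (mL) and normalities (N)."""
    net_milliequivalents = (naoh_ml * naoh_n) - (h2so4_ml * h2so4_n)
    return net_milliequivalents * 50_000 / sample_ml

# 50 mL sample, 2.0 mL of 0.02 N H2SO4 added, then 12.0 mL of 0.02 N NaOH to pH 8.3.
print(hot_acidity(naoh_ml=12.0, naoh_n=0.02, h2so4_ml=2.0, h2so4_n=0.02, sample_ml=50.0))
```

This example works out to roughly 200 mg CaCO3/L. If no acid was needed to bring the sample to pH 4.0, pass 0 for the H2SO4 volume.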

- What is Hardness?

‘Hardness’ is defined as the total concentration of alkaline earth ions (Ca2+, Mg2+, Sr2+ and Ba2+) in water. The Ca2+ and Mg2+ ions dominate the alkaline earth ions. Therefore, one can refer to total hardness as the total concentration of the Ca2+ and Mg2+ ions in solution. Hardness is expressed as mg CaCO3/L water sample (ppm CaCO3). Read MANTECH’s hardness method abstract here.
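As an illustration of expressing hardness as mg CaCO3/L, the sketch below assumes the calculation approach of Standard Methods 2340B, which converts separately measured calcium and magnesium concentrations using the factors 2.497 and 4.118. That approach is not described in this FAQ and may differ from MANTECH's hardness method, so treat the function as a hypothetical example.

```python
CA_TO_CACO3 = 2.497  # mg CaCO3 per mg Ca (ratio of CaCO3 and Ca molar masses)
MG_TO_CACO3 = 4.118  # mg CaCO3 per mg Mg (ratio of CaCO3 and Mg molar masses)

def total_hardness(ca_mg_per_l, mg_mg_per_l):
    """Total hardness in mg CaCO3/L from calcium and magnesium in mg/L."""
    return CA_TO_CACO3 * ca_mg_per_l + MG_TO_CACO3 * mg_mg_per_l

# Water containing 40 mg/L calcium and 10 mg/L magnesium.
print(round(total_hardness(40.0, 10.0), 2))
```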

- What is Ammonia?

Ammonia is a colourless gas that is soluble in water and has a distinctive odour. It is a mild environmental hazard because of its toxicity and ability to remain active in the environment. Direct measurement of ammonia using a calibrated ion selective electrode (ISE) is a quick, accurate and precise way to easily determine ammonia levels. Read MANTECH’s ammonia method abstract here.

- What is Salinity?

There are two types of salinity, absolute salinity and practical salinity. Absolute salinity is a ratio between the mass of dissolved material in seawater and the mass of the seawater. Practical salinity is the ratio of the electrical conductivity of a seawater sample to that of a standard potassium chloride (KCl) solution at the same temperature and pressure. Read MANTECH’s salinity method abstract here.

- What is Turbidity?

Turbidity is defined as the amount of suspended particles in a solution, measured in nephelometric turbidity units (NTU). It is used as a general indicator of the quality of water, along with colour and odour. The US EPA has a maximum contaminant level (MCL) of 5 NTU for drinking and wastewaters. Read MANTECH’s turbidity method abstract here.

- What is oxidation reduction potential (redox)?

Oxidation-reduction potential (ORP), also known as redox potential, refers to the capacity of a solution to oxidize (accept electrons) or reduce (donate electrons). ORP sensors work by measuring the voltage across a circuit formed between the indicator and reference electrodes. When an ORP electrode is placed in a solution containing oxidizing or reducing agents, electrons are transferred back and forth on the measuring surface, generating an electrical potential. Common applications for the ORP method include monitoring water chlorination processes for water disinfection, distinguishing between oxidizers and reducers present in wastewater, and metal screening. Read MANTECH’s redox potential method abstract here.

- What are Nitrates?

Nitrates (NO3) are formed from the two most common elements on earth, nitrogen and oxygen. Their presence in soils comes from nitrogen-fixing bacteria in the soil, decay of organic matter, industrial effluents, human sewage, and livestock manure. Nitrates are a serious problem since they do not evaporate, and hence remain dissolved and accumulate in the groundwater. Read MANTECH’s nitrate method abstract here.

- What is alkalinity?

Alkalinity refers to the capability of water to neutralize acid, also known as an expression of buffering capacity. A buffer is a solution to which an acid can be added without changing the concentration of available H+ ions (without changing the pH) appreciably. In other words, a buffer absorbs the excess H+ ions and protects the water body from fluctuations in pH. Read MANTECH’s alkalinity method abstract here.

- What is pH?

The potential of hydrogen (pH) is a measure of hydrogen ion (H+) concentration. Solutions with a high concentration of H+ ions have a low pH (acidic). Solutions with a low concentration of H+ ions have a high pH (alkaline). Read MANTECH’s pH method abstract here.
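The relationship between H+ concentration and pH can be sketched as follows. This assumes the common approximation pH = -log10([H+]) with concentration in mol/L; strictly, pH is defined on hydrogen ion activity, and a pH electrode is calibrated against buffers rather than computed this way.

```python
import math


def ph_from_h_concentration(h_mol_per_l):
    """Approximate pH from hydrogen ion concentration in mol/L."""
    if h_mol_per_l <= 0:
        raise ValueError("concentration must be positive")
    return -math.log10(h_mol_per_l)


# Higher H+ concentration gives a lower (more acidic) pH
print(ph_from_h_concentration(1e-3))
print(ph_from_h_concentration(1e-10))
```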

- What is a titration?

Titration uses a solution of known concentration to determine the concentration of an unknown solution. The titrant (the solution with a known concentration) is added from a buret to a known quantity of the analyte (the solution with an unknown concentration) until the reaction is complete. Knowing the volume of titrant added allows the concentration of the unknown solution to be determined.
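As a rough sketch of the final calculation, the unknown concentration follows from the titrant volume at the endpoint. The example assumes a known reaction stoichiometry (moles of analyte per mole of titrant, 1:1 by default); the function and parameter names are illustrative.

```python
def analyte_molarity(titrant_molarity, titrant_ml, analyte_ml, analyte_per_titrant=1.0):
    """Concentration of the unknown (mol/L) from the titrant volume at the endpoint."""
    if analyte_ml <= 0:
        raise ValueError("analyte volume must be positive")
    # Millimoles of titrant delivered, converted to millimoles of analyte
    analyte_mmol = titrant_molarity * titrant_ml * analyte_per_titrant
    return analyte_mmol / analyte_ml


# Illustrative: 25.0 mL of 0.1 M titrant neutralizes 50.0 mL of sample
print(analyte_molarity(0.1, 25.0, 50.0))
```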

- What is conductivity?

Conductivity, also known as electrical conductivity, is used to measure the concentration of dissolved solids which have been ionized in a polar solution such as water. Read MANTECH’s conductivity method abstract here.

- How many cables and what kind are required to connect an MT30 system to the computer?

MANTECH Pro Software requires at least 4 USB cables on the computer. This is not including the computer’s mouse or keyboard. When required, the system will include a 4-port USB hub. Please see Technical Bulletin 2023-012 for a complete list of the minimum computer requirements.

- How can I determine true color or filtered color using a turbidity measurement instead of filtering the sample?

True or Filtered Color is determined by filtering samples through a 0.45 micron filter before analyzing the sample in a spectrophotometer. When running large amounts of samples on automated systems, this filtering can become time consuming and heavy on resource usage. An alternative method (Bennett and Drikas 1993) for determining true color without filtering has been established, through the use of a turbidity meter to quantify the contribution from solids present in the sample. MANTECH can easily combine automated Turbidity and Color analysis on one system, determined from one sample, to provide True Color results without the need to filter.

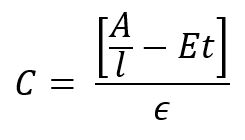

The determination is as follows:

C – Color result (Cu)

A – sample absorbance (Abs)

l – cell path length (cm)

E – scattering coefficient, estimated at 0.00264 NTU⁻¹ cm⁻¹

t – turbidity (NTU)

ϵ – color absorptivity coefficient, determined through calibration (Cu⁻¹ cm⁻¹)
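The equation itself appears only in the image, so the following is a hedged sketch rather than MANTECH's exact formula. It assumes the measured absorbance is the sum of a color term and a turbidity scattering term, A = ϵ·C·l + E·t·l, which is consistent with the units listed for each symbol, and solves for C. The function name and example values are illustrative.

```python
def true_color_cu(absorbance, path_cm, turbidity_ntu, color_absorptivity, scattering=0.00264):
    """Estimate true color (Cu) by removing the turbidity contribution to absorbance.

    Assumed model: A = eps * C * l + E * t * l, solved for C.
    """
    if path_cm <= 0 or color_absorptivity <= 0:
        raise ValueError("path length and absorptivity must be positive")
    # Absorbance per cm, minus the part attributed to light scattering by solids
    color_absorbance_per_cm = absorbance / path_cm - scattering * turbidity_ntu
    return color_absorbance_per_cm / color_absorptivity


# Illustrative: A = 0.232 in a 5 cm cell, 10 NTU, eps = 0.001 Cu^-1 cm^-1
print(true_color_cu(0.232, 5.0, 10.0, 0.001))
```

With zero turbidity the correction term vanishes and the result reduces to A / (ϵ·l).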

- Do I require a USB-connected Thermistor for Temperature Compensation on my MANTECH Pro system?

- What is the weight and dimensions for AM400 autosamplers?

| Autosampler Model | Dimensions in Inches | Dimensions in Centimeters | Crate ONLY (lbs) | Crate ONLY (kg) | Weight Estimate with System & Accessories (lbs)* | Weight Estimate with System & Accessories (kg)* |
|---|---|---|---|---|---|---|
| 401 | 24 x 33 x 29 | 62 x 85 x 74 | 75 | 34 | 200 | 91 |
| 402 | 36 x 33 x 29 | 91 x 85 x 74 | 91 | 41 | 250 | 113 |
| 403 | 47 x 33 x 29 | 122 x 85 x 74 | 107 | 49 | 275 | 125 |
| 404 | 59 x 33 x 29 | 151 x 85 x 74 | 118 | 54 | 300 | 136 |
| 405 | 71 x 33 x 29 | 183 x 85 x 74 | 130 | 59 | 325 | 148 |

*Actual weight may vary by actual system and accessories.

- What is ppm (mg/L) of chloride for different conductivity standards?

KCl → K⁺ + Cl⁻
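Because each mole of KCl releases one mole of chloride, the chloride values in the table for this question can be reproduced from the KCl concentration. The sketch below assumes molar masses of 74.5513 g/mol for KCl and 35.453 g/mol for Cl, which match the table's figures; the function name is illustrative.

```python
KCL_MOLAR_MASS = 74.5513  # g/mol, assumed (matches the table values)
CL_ATOMIC_MASS = 35.453   # g/mol, assumed (matches the table values)


def chloride_ppm_from_kcl(kcl_mg_per_l):
    """Chloride concentration (mg/L, i.e. ppm) in a KCl standard of given strength."""
    kcl_mol_per_l = kcl_mg_per_l / 1000.0 / KCL_MOLAR_MASS
    # One Cl- per KCl, so the molarity carries over directly
    return kcl_mol_per_l * CL_ATOMIC_MASS * 1000.0


# 745 mg/L KCl (approximately 0.01 M)
print(round(chloride_ppm_from_kcl(745.0), 3))
```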

| Conductivity (µS/cm) | mg/L KCl | g/L KCl | M KCl | Cl⁻ (ppm) |
|---|---|---|---|---|
| 10 | 5.272469922 | 0.005272 | 7.07227E-05 | 2.507332215 |
| 100 | 52.72469922 | 0.052725 | 0.000707227 | 25.07332215 |
| 147 | 77.50530786 | 0.077505 | 0.001039624 | 36.85778356 |
| 1,413 | 745 | 0.745 | 0.009993119 | 354.286042 |
| 12,000 | 6326.963907 | 6.326964 | 0.084867251 | 3008.798658 |

- How do you calculate Total Suspended Solids (TSS) from Turbidity?

Total suspended solids (TSS) analysis requires filtering, wet weighing, drying in an oven for a few hours, and then dry weighing. It is typically not performed by treatment plants, and even for labs it is time consuming.

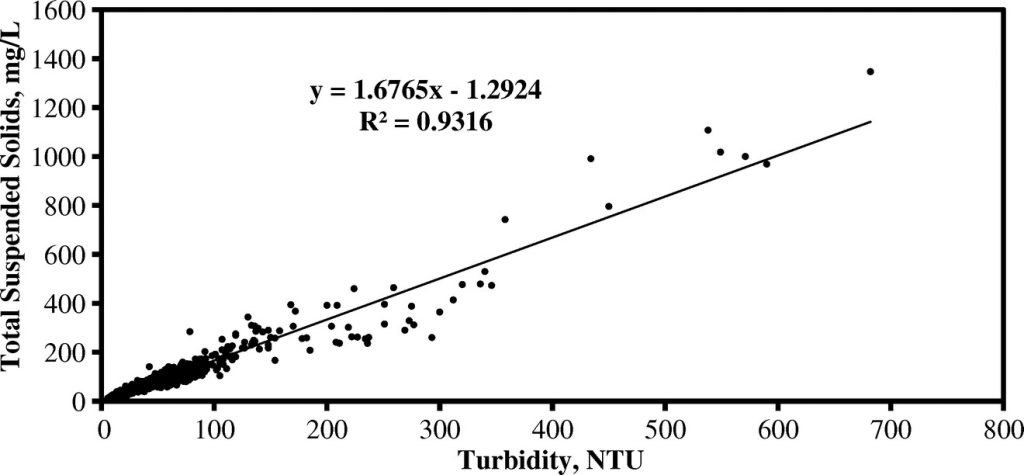

Turbidity is a surrogate measure for TSS that allows rapid automated analysis of batches of samples. A log-linear model shows strong positive correlation between TSS and turbidity (R² = 0.9374), with a regression equation of ln(TSS) = 0.979 ln(Turb.) + 0.574. This model can be applied directly to turbidity analysis results to determine the TSS. MANTECH offers the MT30 analyzer for quick and easy “hands off” TSS analysis, only requiring 40 mL of sample volume in batches up to hundreds of samples.
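The regression quoted above can be applied with a few lines of code. This is a sketch using the stated coefficients (0.979 and 0.574); the TSS unit is assumed to be mg/L, which the text does not state, and a site-specific correlation would normally replace these coefficients.

```python
import math


def tss_from_turbidity(turbidity_ntu, slope=0.979, intercept=0.574):
    """Estimate TSS from turbidity using ln(TSS) = slope * ln(Turb.) + intercept."""
    if turbidity_ntu <= 0:
        raise ValueError("turbidity must be positive for a log-linear model")
    return math.exp(slope * math.log(turbidity_ntu) + intercept)


# Apply the model to a batch of turbidity results (NTU)
for ntu in (1.0, 10.0, 100.0):
    print(ntu, round(tss_from_turbidity(ntu), 2))
```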

An example scatterplot showing a Turbidity:TSS correlation is provided below:

- What is the flow rate of the IntelliPump?

The MANTECH IntelliPump is a variable speed intelligent pump that is controlled in speed and direction by the MANTECH Pro software. The pump is set at a specific speed (1-100) and either 1/4″ or 1/8″ diameter tubing is used to achieve desired flow rates for various applications. The pump can then be calibrated by measuring liquid dispensed over a set period of time, and entering the value into the Hardware Configuration window of the software.
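The calibration value described above is simply the volume dispensed divided by the run time. A minimal sketch follows, assuming the result is expressed in mL/min; the function name and numbers are illustrative, and the units expected by the Hardware Configuration window are not specified here.

```python
def calibrated_flow_ml_per_min(dispensed_ml, run_seconds):
    """Flow rate in mL/min from a timed dispense at a fixed speed setting."""
    if run_seconds <= 0:
        raise ValueError("run time must be positive")
    return dispensed_ml / (run_seconds / 60.0)


# Illustrative: 78.0 mL collected over 30 seconds
print(calibrated_flow_ml_per_min(78.0, 30.0))
```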

Pumps are assigned a default estimated flow rate based on the speed setting and tubing size, and this flow rate is used unless the end user decides to run a pump calibration. The estimated flow rates for various speed and tubing settings are provided below:

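| Tubing Diameter (inches) | Speed Setting (%) | Flow Rate (mL/min) |
|---|---|---|
| 1/8” | 6 (min) | 1.9 |
| 1/8” | 10 | 17.88 |
| 1/8” | 20 | 56.22 |
| 1/8” | 30 | 92.27 |
| 1/8” | 40 | 126.02 |
| 1/8” | 50 | 157.47 |
| 1/8” | 60 | 186.63 |
| 1/8” | 70 | 213.49 |
| 1/8” | 80 | 239.05 |
| 1/8” | 90 | 260.32 |
| 1/8” | 98 (max) | 276.48 |
| 1/4” | 3 (min) | 2.79 |
| 1/4” | 10 | 61.82 |
| 1/4” | 20 | 146.15 |
| 1/4” | 30 | 230.48 |
| 1/4” | 40 | 314.81 |
| 1/4” | 50 | 399.14 |
| 1/4” | 60 | 483.47 |
| 1/4” | 70 | 567.80 |
| 1/4” | 80 | 652.13 |
| 1/4” | 90 | 736.46 |
| 1/4” | 99 (max) | 812.36 |

- What is the shelf life of MANTECH Turbidity Standards?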

MANTECH Turbidity Standards have a one year shelf life from the date they are put in stock. The Expiry Date is always shown along with the lot number on the bottle labels.

- Why is there liquid leaking from my TitraSip system?

The presence of liquid beneath the Titrasip would be related to one of two things:

• Overflow from the vessel

• Leaks from the tubing

Generally speaking, the rinse pump for the Titrasip should be able to pump indefinitely with no risk of overflowing the glass vessel, because of the top waste line connection. That top waste line should drain liquid at the same rate that the pump delivers the rinse water, but to do this it relies on a clear line with no blockages, running downhill for its full length to the drain or waste container. When overflowing occurs, it is often because part of the drain line does not run downhill, or runs horizontally for too long a distance. Since draining is entirely gravity-based, any place in the line where liquid can settle makes it more difficult for liquid to drain from the vessel. The same thing occurs when growth builds up inside the waste line and restricts the flow path.

There is a quick way to test whether overflowing is the source of these challenges:

1. Remove the top lid from the Titrasip vessel (storing the pH electrode in storage solution)

2. Test the drain valve with the front switch and also drain any liquid that was in the vessel before

3. Watching the vessel, use the front switch on the Titrasip to turn on the Rinse pump. Keep your finger on the switch.

4. As the vessel fills, it should reach the top connection and start draining down the waste line. The liquid level may go above the connection for a brief second before the full draining starts, but it should not come near the top of the glass vessel.

a. IF it continues filling past the top connection and the liquid reaches the top of the glass vessel, turn off the pump. This would confirm that there is a draining challenge leading to overflows.

b. IF the draining occurs properly, you should see the liquid level reach a stable level at the top connection, and the pump can be left on indefinitely without overflowing. This would confirm there is NOT a draining challenge and it is likely a leak in the tubing.

- What service and support options are available?

MANTECH offers options for on-site or remote assistance. With the customer’s permission, a MANTECH representative can remotely control operations, answer questions, resolve challenges and assist with any other requests. MANTECH offers on-site support with one of our Technical Representatives within 72 hours across Canada and the U.S. Our team of Technical Representatives will assist in installation, repairs, and preventative maintenance of your systems. Find your nearest Technical Representative here. For on-site services outside of Canada and the U.S., please contact your distributor here.

- Do you offer barcoding options?

Yes, barcoding options are available; additional hardware is required. Barcoded labels help you quickly identify results.

- What are the Dimensions and Space Requirements for MANTECH Systems?

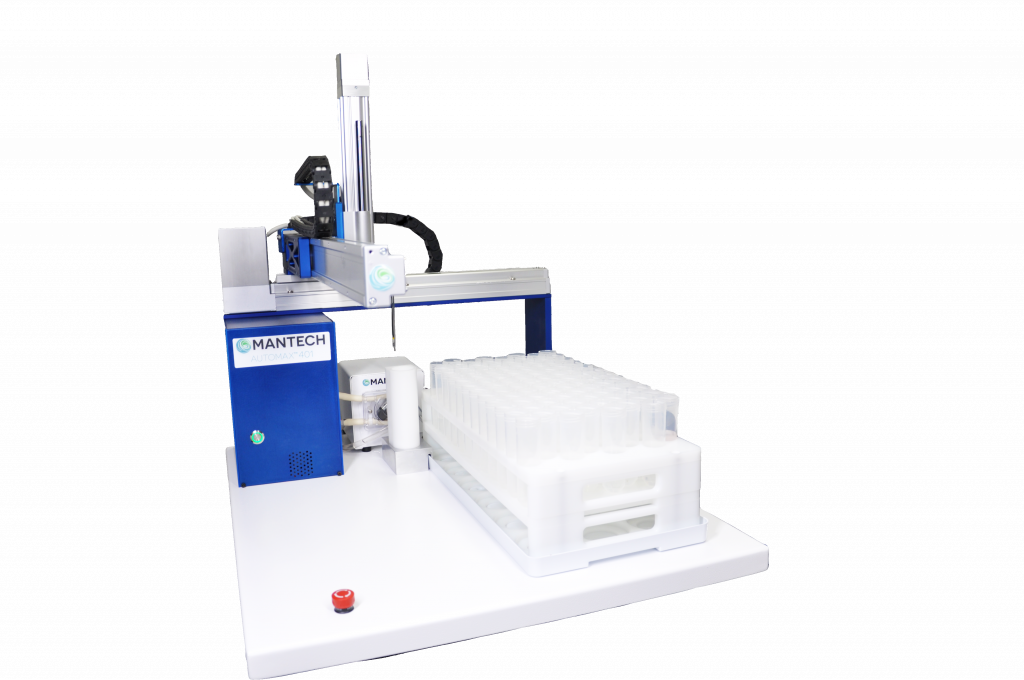

The measurements listed below are based on standard MT-Series systems. Measurements and pictures include the MANTECH System Organizer (MSO), where applicable. System controllers and computer monitors are not included in the dimensions described below.

AM400 Only Configuration (No MSO):

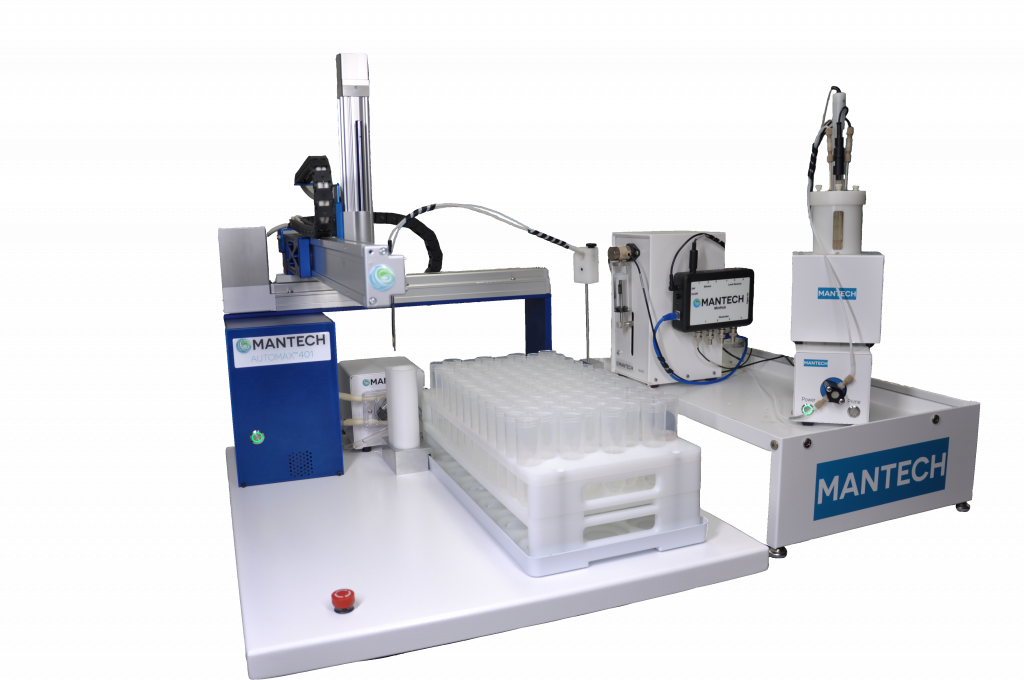

| | AutoMax401 | AutoMax402 | AutoMax403 | AutoMax404 | AutoMax405 |
|---|---|---|---|---|---|
| W | 24” / 62cm | 36” / 92cm | 48” / 122cm | 60” / 152cm | 72” / 182cm |
| D | 29” / 74cm | 29” / 74cm | 29” / 74cm | 29” / 74cm | 29” / 74cm |
| H | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm |

Example MANTECH system with AM400 Only Configuration

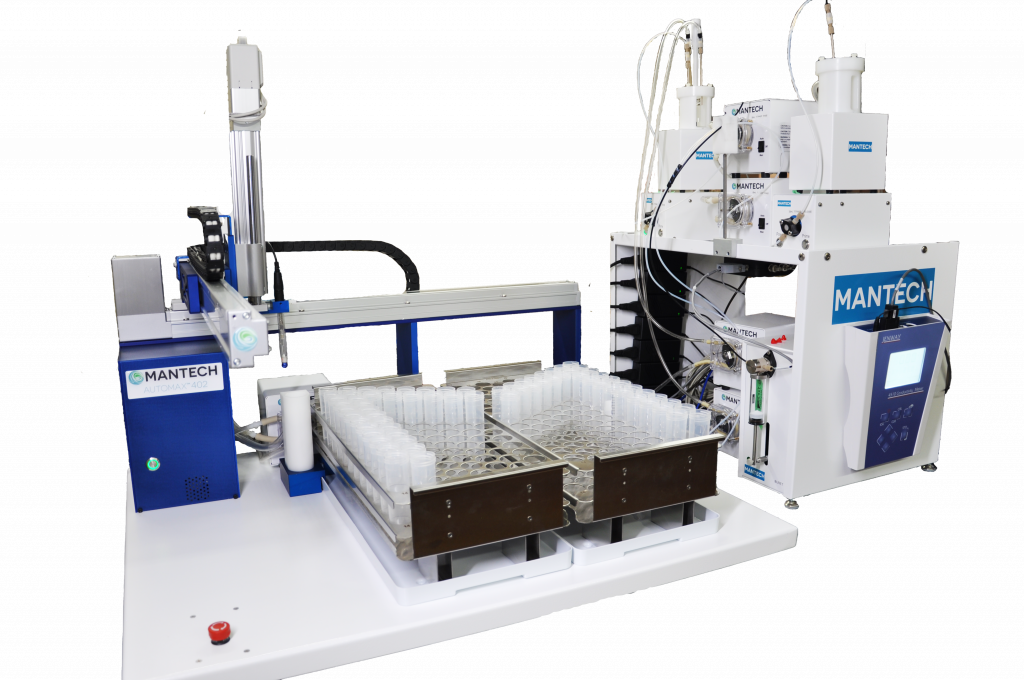

Tall Side MSO Configuration (Recommended Configuration):

| | AutoMax401 | AutoMax402 | AutoMax403 | AutoMax404 | AutoMax405 |
|---|---|---|---|---|---|
| W | 42” / 108cm | 54” / 138cm | 66” / 168cm | 78” / 198cm | 90” / 228cm |
| D | 29” / 74cm | 29” / 74cm | 29” / 74cm | 29” / 74cm | 29” / 74cm |
| H | 40” / 102cm | 40” / 102cm | 40” / 102cm | 40” / 102cm | 40” / 102cm |

Example MANTECH system with tall side MSO

Short Side MSO Configuration:

| | AutoMax401 | AutoMax402 | AutoMax403 | AutoMax404 | AutoMax405 |
|---|---|---|---|---|---|
| W | 42” / 108cm | 54” / 138cm | 66” / 168cm | 78” / 198cm | 90” / 228cm |
| D | 29” / 74cm | 29” / 74cm | 29” / 74cm | 29” / 74cm | 29” / 74cm |
| H | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm |

Example MANTECH system with short side MSO

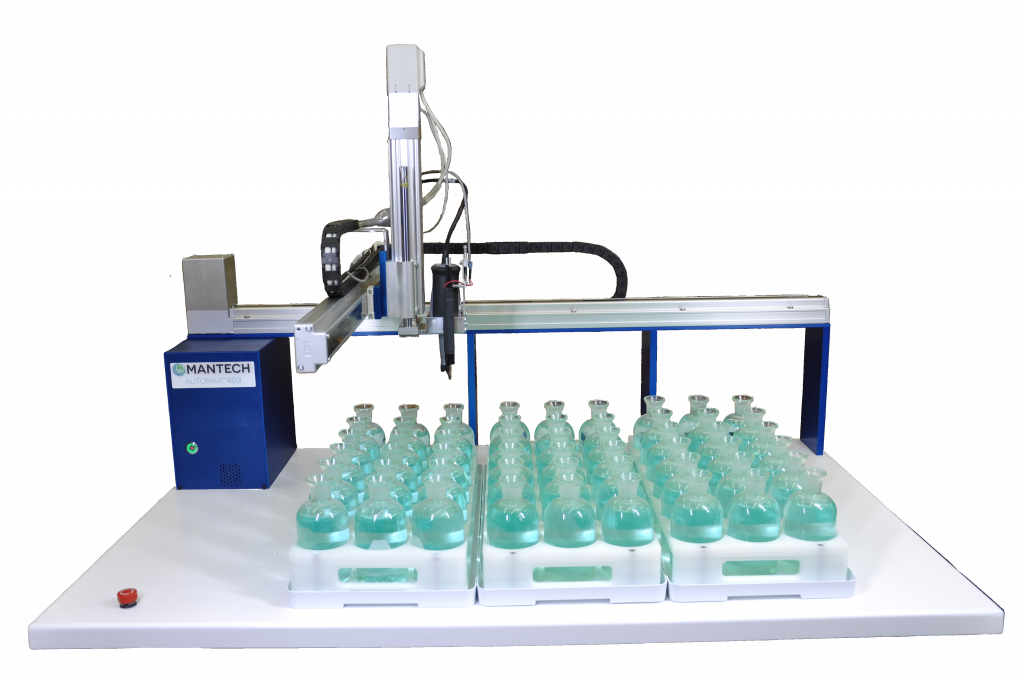

AM400 Series BOD Systems (No MSO):

| | AutoMax401 | AutoMax402 | AutoMax403 | AutoMax404 | AutoMax405 |
|---|---|---|---|---|---|
| W | 24” / 62cm | 36” / 92cm | 48” / 122cm | 60” / 152cm | 72” / 182cm |
| D | 29” / 74cm | 29” / 74cm | 29” / 74cm | 29” / 74cm | 29” / 74cm |
| H | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm | 29.5” / 76cm |

Example MANTECH BOD system with no MSO (Note: modules are to be placed on sampler bed or next to the sampler)

- Why does my Turbidity method require a Multi-Line calibration?

The MANTECH turbidity flow cell has specific optical properties which are different than the cuvettes which you would manually insert (for example the sealed standards for the 3-month calibration). The difference in optical properties between the flow cell cylinder and the cuvette can cause the relationship between actual NTU and expected NTU to be slightly non-linear. This is only applicable in the low end of the measuring range, typically < 1 NTU. To ensure the lowest detection limit possible, we use multi-line to accurately capture how the optical properties of the flow cell are different than the cuvettes.

- Why does my Fluoride method require a Multi-Line calibration?

The Fluoride method requires a multi-line calibration for the probe response at low levels. We have a Minimum Detection Limit for fluoride analysis of 0.01 ppm, and it is known that the slope for fluoride probes (and all ISE probes) deviates from the expected range and approaches zero as the fluoride concentration also approaches zero. We use multi-line to account for this changing slope at the low concentration levels to achieve the lowest detection limit possible. The ability to switch to linear without an effect on results depends on the range you are measuring.

- What are the part numbers and BOMs for the QC Racks?

Refer to the following table for updated part numbers and BOMs for the QC Racks.

| Part Number | Description |
|---|---|
| PC-AM400-QC-50-24 | Rack for QC or calibration locations using 50mL tubes. Allows for 24 positions. Can be used with all AM400 series autosamplers |
| PC-AM400-QC-50-36 | Rack for QC or calibration locations using 50mL tubes. Allows for 36 positions. Can be used with AM402, AM403, AM404, and AM405 autosamplers |
| PC-AM400-QC-50-48 | Rack for QC or calibration locations using 50mL tubes. Allows for 48 positions. Can be used with AM403, AM404, and AM405 autosamplers |
| PC-AM400-QC-120-9 | Rack for QC or calibration locations using 120mL cups. Allows for 9 positions. Can be used with all AM400 series autosamplers |
| PC-AM400-QC-120-18 | Rack for QC or calibration locations using 120mL cups. Allows for 18 positions. Can be used with AM402, AM403, AM404, and AM405 autosamplers |
| PC-AM400-QC-120-27 | Rack for QC or calibration locations using 120mL cups. Allows for 27 positions. Can be used with AM403, AM404, and AM405 autosamplers |
| PC-AM400-QC-125-7 | Rack for QC or calibration locations using 125mL cups. Allows for 7 positions. Can be used with all AM400 series autosamplers |
| PC-AM400-QC-125-14 | Rack for QC or calibration locations using 125mL cups. Allows for 14 positions. Can be used with AM402, AM403, AM404, and AM405 autosamplers |
| PC-AM400-QC-125-21 | Rack for QC or calibration locations using 125mL cups. Allows for 21 positions. Can be used with AM403, AM404, and AM405 autosamplers |

- I'm having trouble connecting my MiniHub. Is there a way to reset it to factory settings?

Yes! Follow our short tutorial on resetting your MiniHub to factory settings if it does not connect to the IP address of 192.168.1.10

Follow our updated technical bulletin here.

- What are QC racks and what capacities are available?

Available as an optional add-on, MANTECH offers a separate fixed rack located in front of the rinse station for placing calibration solution, storage solution(s), and QC checks instead of limiting the sample rack capacity. Capacities range based on autosampler and tube/cup size.

MT Series QC Rack Options

| Part Number | # of Positions | Tube/Cup Size | Autosampler Model |
|---|---|---|---|
| PC-AM400-QC-50-24 | 24 | 50mL | All AM400 Models |
| PC-AM400-QC-50-36 | 36 | 50mL | AM402, AM403, AM404, AM405 |
| PC-AM400-QC-50-48 | 48 | 50mL | AM403, AM404, AM405 |
| PC-AM400-QC-120-9 | 9 | 120mL | All AM400 Models |
| PC-AM400-QC-120-18 | 18 | 120mL | AM402, AM403, AM404, AM405 |
| PC-AM400-QC-120-27 | 27 | 120mL | AM403, AM404, AM405 |
| PC-AM400-QC-125-7 | 7 | 125mL | All AM400 Models |
| PC-AM400-QC-125-14 | 14 | 125mL | AM402, AM403, AM404, AM405 |
| PC-AM400-QC-125-21 | 21 | 125mL | AM403, AM404, AM405 |

BOD QC Rack Options

| Part Number | # of Positions | Tube/Cup Size | Autosampler Model |
|---|---|---|---|
| PB-10265 | 3 | 300mL | All AM400 Models |

- How do I retrieve the cell constant from a Consort conductivity meter?

The cell constant can be retrieved from the GLP report, directly found on the meter. Press CAL, then press the Down Arrow key to select GLP, and press OK. Press OK again on Show Report.

This will bring up the information for the calibration that was performed on the meter, including the cell constant value. Scroll down using the Down Arrow key to find the section called “Calibration”, where CC = Cell Constant (cm⁻¹).

Note: Scrolling to the bottom will show the previous cell constant.

- How do I add the Gran alkalinity method to MANTECH Pro?

Follow this step-by-step PDF on adding the Gran alkalinity method to MANTECH Pro Software.

- What information is required to automate multi-parameter and titration-based applications?

Automating multi-parameter and titration-based applications can greatly increase the efficiency and throughput of a laboratory. To automate these analyses, the following information is required:

- Which parameters are you looking to automate? Here is a list of parameters we offer.

- Are there any SOPs you can provide for the requested applications?

- How many samples do you run per day, week, batch, etc.?

- Does your company run any BOD or COD testing?

- What are the Specifications for Ethernet and USB Hubs?

See below for hub specifications:

| Specification | Ethernet Hub | USB Hub |
|---|---|---|
| Ports | 5 | 10 |
| Power Input (AC) | 100-240V | 100-240V |
| USB | N/A | USB 2.0 |
| Driver Required | No | No |

Note: All hubs must be directly connected to power for all system installations. MANTECH does not recommend hubs acquiring power solely from the USB connection of the computer.

- How is DI water connected to MANTECH Analyzers for rinsing?

MANTECH Analyzers utilize peristaltic pumps for rinsing that can pull DI water either from a static reservoir or container located near the analyzer, or from a pressurized line connected directly to the rinse pump inlet.

IMPORTANT NOTE: If drawing DI water from a pressurized line, it is important that the pressure in the line does not exceed 20 psi. A pressure regulator valve can be installed between the line hookup and the rinse pump to reduce pressure below this limit.

- Why is it important to use Degassed DI Water for blanks and standards preparation when performing automated Turbidity analysis?

Any dilutions used to prepare turbidity standards, as well as blanks, must use degassed DI water. This is especially important for accurate low-end turbidity analysis, as there is often a positive bias due to bubbles introduced into the DI water solution when it is being produced/dispensed. This effect becomes more noticeable with lower NTU readings, and can cause challenges with performing an accurate calibration. To degas DI water, it must be dispensed into a very clean bottle or container, and left to sit for ~24h. You will see bubbles form on the side of the container as the water degasses. After degassing, it is ready to use for blanks and standards preparation.

- Why are my Turbidity QCs failing?

One of the most common reasons for Turbidity QCs failing is due to DI water that is not degassed being used for blanks and standards preparation.

Any dilutions used to prepare turbidity standards, as well as blanks, must use degassed DI water. This is especially important for accurate low-end turbidity analysis, as there is often a positive bias due to bubbles introduced into the DI water solution when it is being produced/dispensed. This effect becomes more noticeable with lower NTU readings, and can cause challenges with performing an accurate calibration. To degas DI water, it must be dispensed into a very clean bottle or container, and left to sit for ~24h. You will see bubbles form on the side of the container as the water degasses. After degassing, it is ready to use for blanks and standards preparation.

When your turbidity QCs are failing, it is best to do the following:

- Ensure you are using Degassed DI water

- If you do not have this, prepare by leaving DI water to sit for 24h in a very clean bottle or container

- Repeat the QCs with a freshly-prepared standard

- If still failing, perform a new multi-point calibration, followed by x3 blanks then the same QC standards, all with fresh solutions

- If still failing the above, remove the flow cell and clean it, and also perform a new meter calibration with the set of sealed cuvette standards

- Then, reinstall the flow cell and perform a new free calibration, then a new multi-point calibration, followed by x3 blanks then the same QC standards, all with fresh solutions

- Is PC-Titrate software compatible with Windows 10 or 11 OS?

As a result of major security updates Microsoft has implemented, MANTECH cannot guarantee that PC-Titrate will operate on updated Windows 10 or 11 Pro operating systems. PC-Titrate can operate in virtual mode; however, this leaves the analyzer vulnerable to viruses (leading to corrupted databases) and could impact the software when Windows updates are performed. More information about what you need to know before updating to Windows 10 is available here. In 2021, MANTECH introduced a line of all-new hardware and MANTECH Pro software designed for Windows 10 and 11 Pro OS, meaning that all security and OS updates can run concurrently.

There are a few different options to move forward:

- Install PC-Titrate on a Windows 10 computer (no guarantee).

- Upgrade the software to MANTECH Pro software, which is backwards compatible with most hardware.

- Replace the entire system (all new hardware and software) with a trade-in discount for your existing system.

To discuss your options, please contact our team here.

- What is the measuring range of the Model C10 conductivity meter?

The measuring range is 0.000 µS/cm to 2,000 mS/cm. The supplied conductivity probe has the same measuring range. The conductivity probe has a built-in PT1000 temperature probe for automated temperature compensation.

- How many burets and pumps can be added to an analyzer?

All MT models can include up to 20 burets, 30 IntelliPeri™ pumps and 30 IntelliDose™ pumps.

- What methods of lime analysis can be automated?

MANTECH systems conform to Tex-600-J and Tex-406-A Part III. Titrations (and back titrations) utilizing hydrochloric acid, sodium hydroxide, and other solutions are automated via MANTECH’s high precision 100,000 step buret, with a pH measuring range of 0-14.

Tex-600-J includes sampling and testing of:

- hydrated lime

- quicklime

- commercial lime slurry

- carbide lime slurry

MT Series of Automated Environmental Titration and Multi-Parameter Analyzers

Save Space and Lower Your Cost Per Sample

Whether your laboratory requires a simple pH system or a system with eight parameters, MANTECH will deliver. We realize each laboratory is unique and as a result, our systems are tailor configured with off-the-shelf modules to meet your requirements for sample volume, parameters and sample size. Each system is fully automated by easy to use software and robust robotics.

Benefits of Automated Titration Equipment

- Automates 32-720 samples in a single batch

- Customizable user interface for simplified operation

- IntelliRinse™ prevents cross contamination between samples

- Eliminates potential for human error with automated pipetting using MANTECH’s Titrasip™

- Non-destructive sample preparation allows for up to 5 parameters on a single sample

Applications

- Water

- Soil

- Food & Beverage